The High Bandwidth Memory (HBM) market is experiencing a profound supply-demand imbalance in 2026, driven by explosive AI infrastructure needs, positioning investors in key memory makers like SK Hynix, Samsung, and Micron for potential gains amid a semiconductor supercycle.

1)

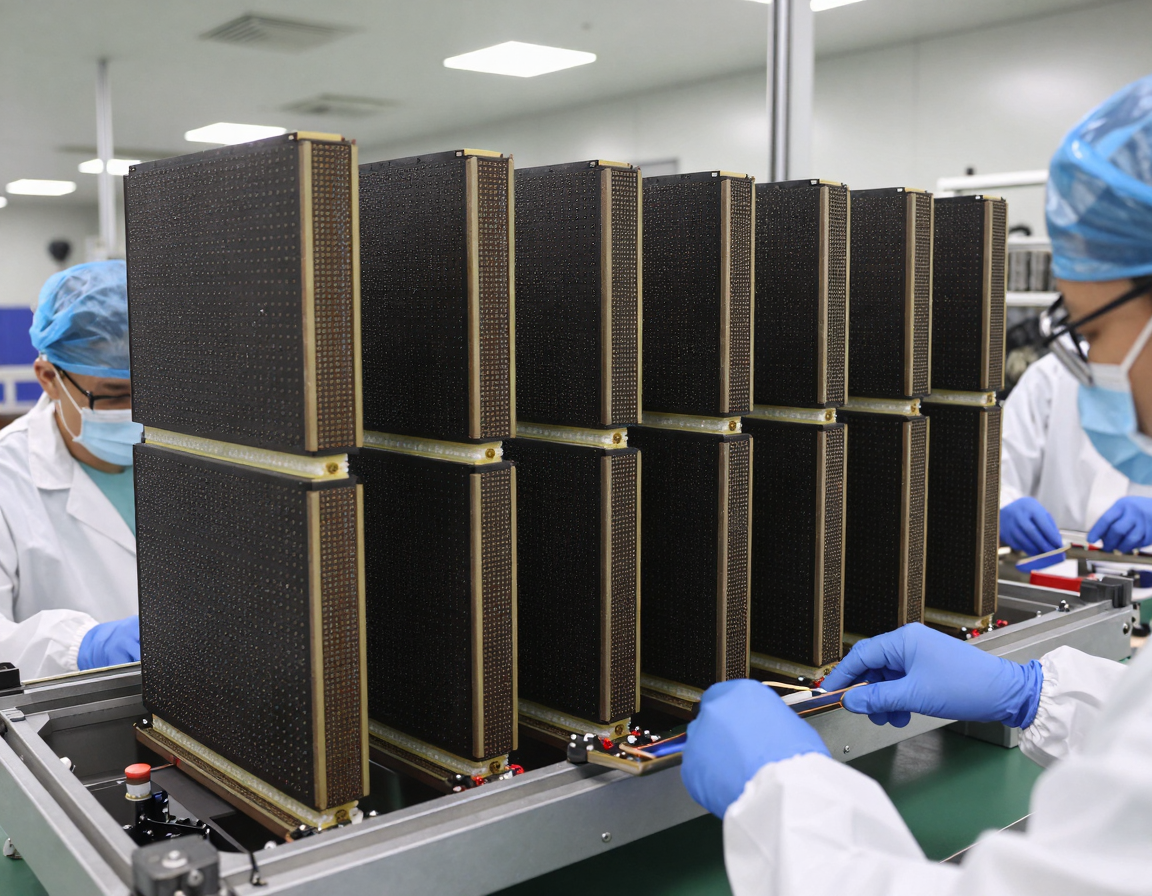

High Bandwidth Memory (HBM) is a premium DRAM variant essential for AI accelerators. It stacks memory dies vertically and connects them with through-silicon vias, delivering far more speed and bandwidth than conventional DRAM. Production is limited to Samsung Electronics, SK Hynix, and Micron Technology, and because HBM requires significantly more wafer capacity per bit, every increase in HBM output displaces standard DRAM output.

AI systems consume large volumes of HBM per GPU cluster, and memory requirements scale with model size and complexity, shifting the industry from cyclical to structurally tight. By 2026, HBM is projected to consume about 25% of total DRAM wafer production, with year-on-year demand growth of around 70%.
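To see why HBM's wafer share matters more than its bit share, here is a minimal sketch that converts HBM's share of DRAM wafers into its share of DRAM bit output. The helper `hbm_bit_share` and the 3x wafer-per-bit multiple are illustrative assumptions, not figures from the sources above; the only input taken from the text is the ~25% wafer share.

```python
def hbm_bit_share(hbm_wafer_share: float, wafer_per_bit_multiple: float) -> float:
    """Share of total DRAM bit output that is HBM, given HBM's share of wafers.

    wafer_per_bit_multiple is an ASSUMED ratio: how many times more wafer
    capacity HBM needs per bit than standard DRAM (hypothetical input).
    """
    if not 0.0 <= hbm_wafer_share <= 1.0:
        raise ValueError("hbm_wafer_share must be between 0 and 1")
    if wafer_per_bit_multiple <= 0:
        raise ValueError("wafer_per_bit_multiple must be positive")
    # Relative bits produced, normalizing standard DRAM to 1 bit per unit of wafer
    hbm_bits = hbm_wafer_share / wafer_per_bit_multiple
    standard_bits = 1.0 - hbm_wafer_share
    return hbm_bits / (hbm_bits + standard_bits)


# 25% of wafers (the projection above) with a hypothetical 3x wafer-per-bit penalty
print(round(hbm_bit_share(0.25, 3.0), 3))  # 0.1
```

Under these assumptions, a quarter of the wafers yields only about a tenth of the bits, while standard DRAM keeps just 75% of the wafer base it would otherwise have.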

2)

AI infrastructure expansion is the core driver: hyperscalers such as Microsoft, Google, Meta, and Amazon have secured multi-year HBM supply deals, leaving 2026 production sold out. Server memory intensity is also rising, with DRAM and HBM content per server increasing alongside enterprise SSD demand.

The global semiconductor market is forecast to grow more than 25% to $975 billion in 2026, led by memory, which is expected to expand 30% and could surpass $440 billion. The HBM market alone is projected to reach $54.6 billion, up 58% year-over-year, fueled by an 82% surge in ASIC-based AI chips.
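The growth figures above can be sanity-checked by backing out the implied 2025 base from each 2026 value. This is a minimal arithmetic sketch using only the values and growth rates quoted in the text; `implied_prior_value` is a hypothetical helper. Because semiconductor growth is stated as exceeding 25%, the resulting $780B is an upper bound on the implied 2025 base.

```python
def implied_prior_value(current: float, growth: float) -> float:
    """Back out last year's value from this year's value and its growth rate."""
    if growth <= -1.0:
        raise ValueError("growth must be greater than -100%")
    return current / (1.0 + growth)


forecasts = {
    # name: (2026 value in $B, year-over-year growth), figures quoted in the text
    "Global semiconductors": (975.0, 0.25),
    "HBM": (54.6, 0.58),
}

for name, (value_2026, growth) in forecasts.items():
    base = implied_prior_value(value_2026, growth)
    print(f"{name}: implied 2025 base ~${base:.1f}B")
```

This prints roughly $780.0B for semiconductors and $34.6B for HBM.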

3)

Supply growth lags critically: DRAM supply is growing 16% and NAND 17% year-on-year, below historical norms, as capacity pivots to high-margin HBM. Every wafer allocated to HBM is a wafer unavailable for PC, smartphone, and legacy server memory, squeezing conventional DRAM.

HBM4 advances, such as SK Hynix’s 16-layer 48GB device shown at CES 2026 with bandwidth of over 2 TB/s, strain resources further. Tightness in DDR4 and DDR5 is expected to persist into 2027 as AI demand takes priority.
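A rough way to relate those HBM4 specs is peak per-stack bandwidth = interface width x per-pin data rate / 8. The sketch below assumes the 2048-bit interface defined in the JEDEC HBM4 standard and treats pin rates as inputs: 8 Gb/s matches the rate in JEDEC's HBM4 announcement, while 10 Gb/s is a hypothetical faster part used only for illustration. It also splits the 48GB stack across 16 layers to get per-die capacity. The helper names are illustrative.

```python
def stack_bandwidth_tb_s(interface_bits: int, pin_rate_gbps: float) -> float:
    """Peak per-stack bandwidth in TB/s (decimal units).

    interface_bits: data pins per stack (2048 assumed for HBM4)
    pin_rate_gbps:  per-pin data rate in Gb/s (an assumed input)
    """
    gigabytes_per_s = interface_bits * pin_rate_gbps / 8  # Gb/s -> GB/s
    return gigabytes_per_s / 1000  # GB/s -> TB/s


def die_capacity_gb(stack_gb: float, layers: int) -> float:
    """Capacity of each DRAM die when a stack's capacity is split evenly across layers."""
    return stack_gb / layers


print(stack_bandwidth_tb_s(2048, 8.0))   # 2.048
print(stack_bandwidth_tb_s(2048, 10.0))  # 2.56 (hypothetical pin rate)
print(die_capacity_gb(48, 16))           # 3.0
```

The formula gives theoretical peak figures; delivered bandwidth in a real accelerator depends on the controller and workload.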

4)

Prices reflect the imbalance: DRAM prices are up 50-55% this quarter versus Q4 2025, and HBM average selling prices (ASPs) are projected to rise 8% at Samsung, 1% at SK Hynix, and 22% at Micron in 2026. Overall DRAM revenue is forecast to surge 51% and NAND revenue 45%, with ASPs up 33% and 26%, respectively.
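If revenue is treated as ASP multiplied by bits shipped (a simplifying assumption that ignores product mix), the revenue and ASP forecasts above imply a bit-shipment growth rate. `implied_volume_growth` is a hypothetical helper; the inputs are the DRAM and NAND figures quoted in this section.

```python
def implied_volume_growth(revenue_growth: float, asp_growth: float) -> float:
    """Bit-shipment growth implied by revenue growth, assuming revenue = ASP x bits."""
    if asp_growth <= -1.0:
        raise ValueError("asp_growth must be greater than -100%")
    return (1 + revenue_growth) / (1 + asp_growth) - 1


# (revenue growth, ASP growth) forecasts for 2026, as quoted above
segments = {"DRAM": (0.51, 0.33), "NAND": (0.45, 0.26)}

for name, (rev, asp) in segments.items():
    print(f"{name}: implied bit growth ~{implied_volume_growth(rev, asp):.1%}")
```

This yields roughly 13.5% for DRAM and 15.1% for NAND, the same low-to-mid-teens range as the supply growth figures in section 3, though the underlying sources and definitions may differ.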

Bank of America dubs 2026 a ‘supercycle’ akin to the 1990s boom and names SK Hynix its top pick. Shortages of legacy DRAM are pushing enterprise IT costs higher as data centers compete for the same supply.

5)

SK Hynix leads HBM with a 62% shipment share in Q2 2025 and a 57% revenue share in Q3, and is projected to hold over 50% through 2026 in HBM3/HBM3E. HBM3E remains the mainstream standard for AI servers in 2026, with the transition to HBM4 under way.

Samsung and Micron follow, prioritizing HBM for its profitability, with forward reservations locking in AI revenue. Because investment is concentrated on HBM rather than new commodity capacity, this shift also indirectly supports the supply-demand balance in general DRAM.

6)

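For U.S. investors, Micron (NASDAQ: MU) offers direct exposure, benefiting from projected 22% HBM ASP growth and its position as the only U.S.-headquartered HBM producer. The Korean giants SK Hynix (KRX: 000660) and Samsung (KRX: 005930) are listed on the Korea Exchange and are typically accessed through international brokerage accounts, depositary receipts, or ETFs, capturing the core of the supercycle.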

Nvidia's and AMD's reliance on HBM underscores supplier leverage, as memory accounts for a rising share of AI system costs. Institutional forecasts highlight server DDR5 and HBM as the pillars of DRAM demand.

How to Apply This in Practice

Practical Checklist for U.S. Chip Investors:

1. Assess portfolio exposure to HBM leaders: Allocate 10-20% to Micron (MU) and consider the iShares Semiconductor ETF (SOXX) for diversified exposure, noting that it holds U.S.-listed chipmakers rather than Samsung or SK Hynix shares.

2. Monitor quarterly earnings: Watch HBM revenue mix, ASP trends, and capacity utilization in Samsung, SK Hynix, and Micron reports.

3. Track AI capex: Follow capex guidance from hyperscalers and cloud providers, along with Nvidia and AMD commentary, for HBM demand signals.

4. Evaluate valuations: Target stocks with HBM market share above 20% and a forward P/E under 25x, supported by forecasts of 50%+ revenue growth.

5. Diversify risks: Balance with NAND/SSD plays, and hedge via broad tech indices if overexposed to memory volatility.

6. Review supply updates: Follow WSTS and Counterpoint Research for shifts in wafer allocation.

Implement through low-cost brokers, and set alerts for confirmations that 2026 HBM supply is sold out.
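Checklist item 4 can be expressed as a simple screen. The sketch below is hypothetical throughout: the `Candidate` record, the `passes_screen` helper, and every company name and number are placeholders for illustration, not real market data or a recommendation. The default thresholds mirror the checklist; real inputs would come from earnings reports and research providers such as WSTS and Counterpoint Research.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """A hypothetical screening record; every field is a placeholder input."""
    name: str
    hbm_share: float       # share of the HBM market, 0-1
    forward_pe: float      # share price / forward earnings per share
    revenue_growth: float  # forecast revenue growth, 0-1


def passes_screen(
    c: Candidate,
    min_share: float = 0.20,
    max_pe: float = 25.0,
    min_growth: float = 0.50,
) -> bool:
    """Checklist item 4: HBM share above 20%, forward P/E under 25x, 50%+ growth."""
    return (
        c.hbm_share > min_share
        and c.forward_pe < max_pe
        and c.revenue_growth >= min_growth
    )


# Placeholder inputs for illustration only, NOT real company data
universe = [
    Candidate("Hypothetical A", hbm_share=0.55, forward_pe=12.0, revenue_growth=0.60),
    Candidate("Hypothetical B", hbm_share=0.15, forward_pe=10.0, revenue_growth=0.70),
    Candidate("Hypothetical C", hbm_share=0.25, forward_pe=30.0, revenue_growth=0.55),
]
print([c.name for c in universe if passes_screen(c)])  # ['Hypothetical A']
```

Strict `>` and `<` follow the checklist's "above" and "under" wording, while `>=` matches "50%+"; the thresholds are parameters so they can be tightened or relaxed.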

Risk Note

While HBM tightness favors suppliers, risks include delayed AI capex, faster-than-expected capacity ramps, geopolitical tensions in Asia, and economic slowdowns that curb data center builds; conventional DRAM gluts could pressure margins if AI demand falters. Volatility remains high in semiconductors, so consult an advisor and invest only disposable capital.